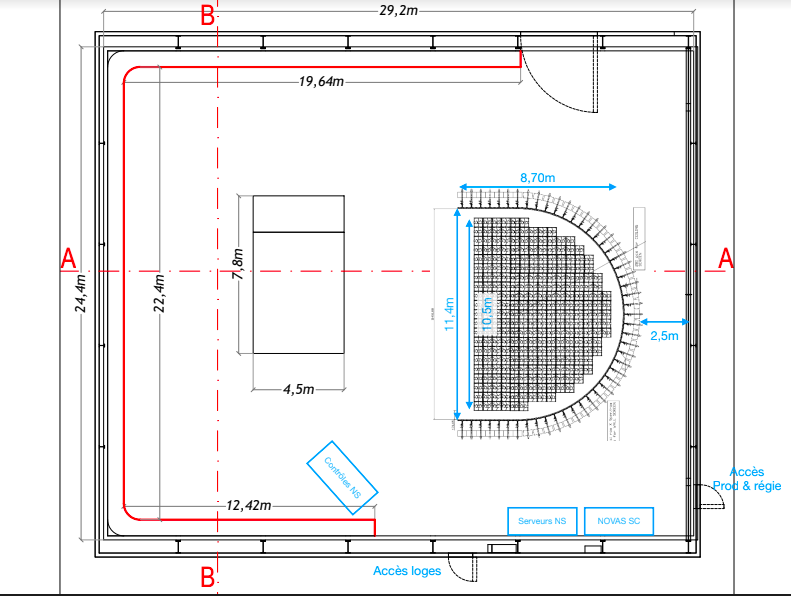

Context / environment

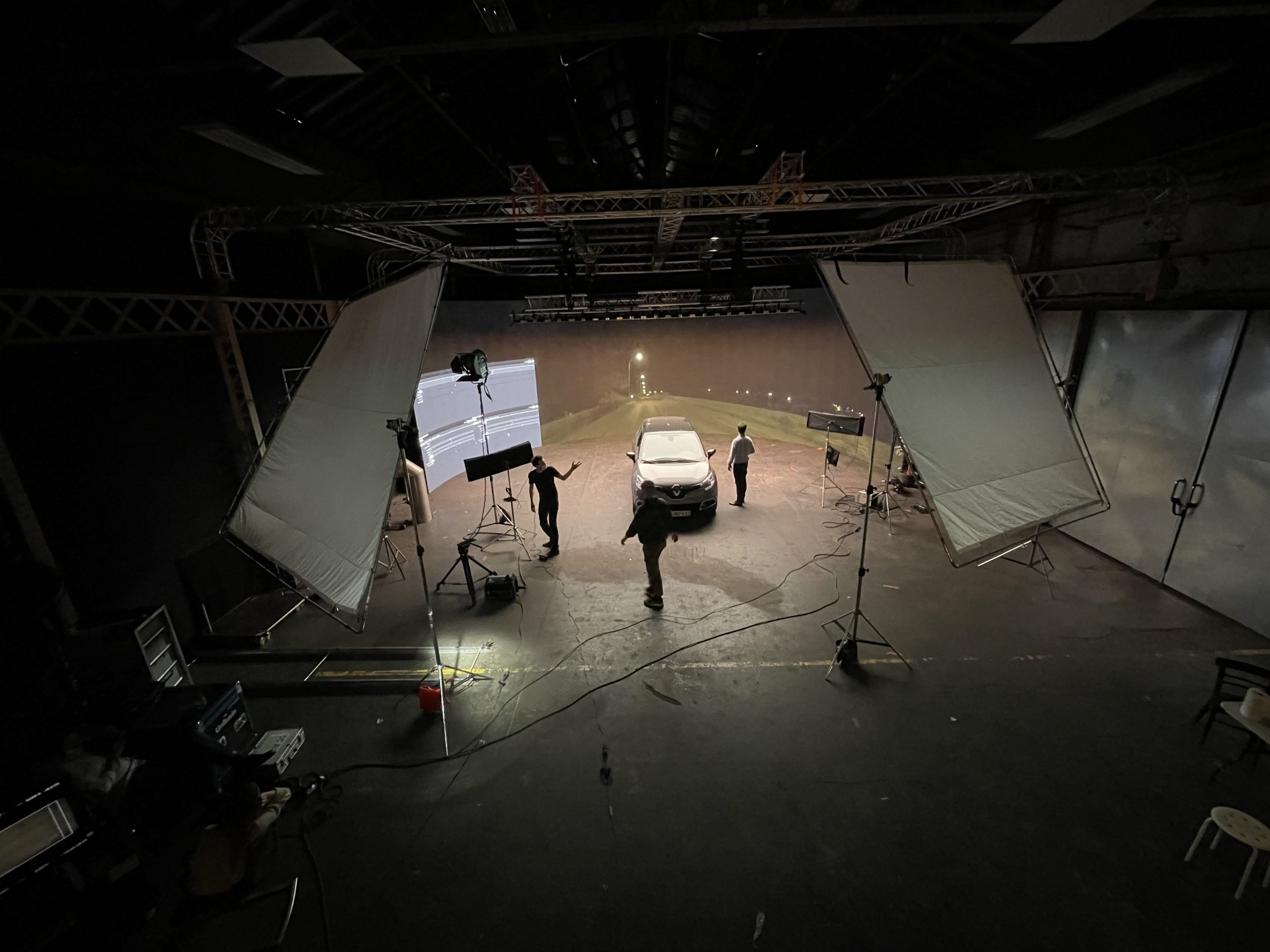

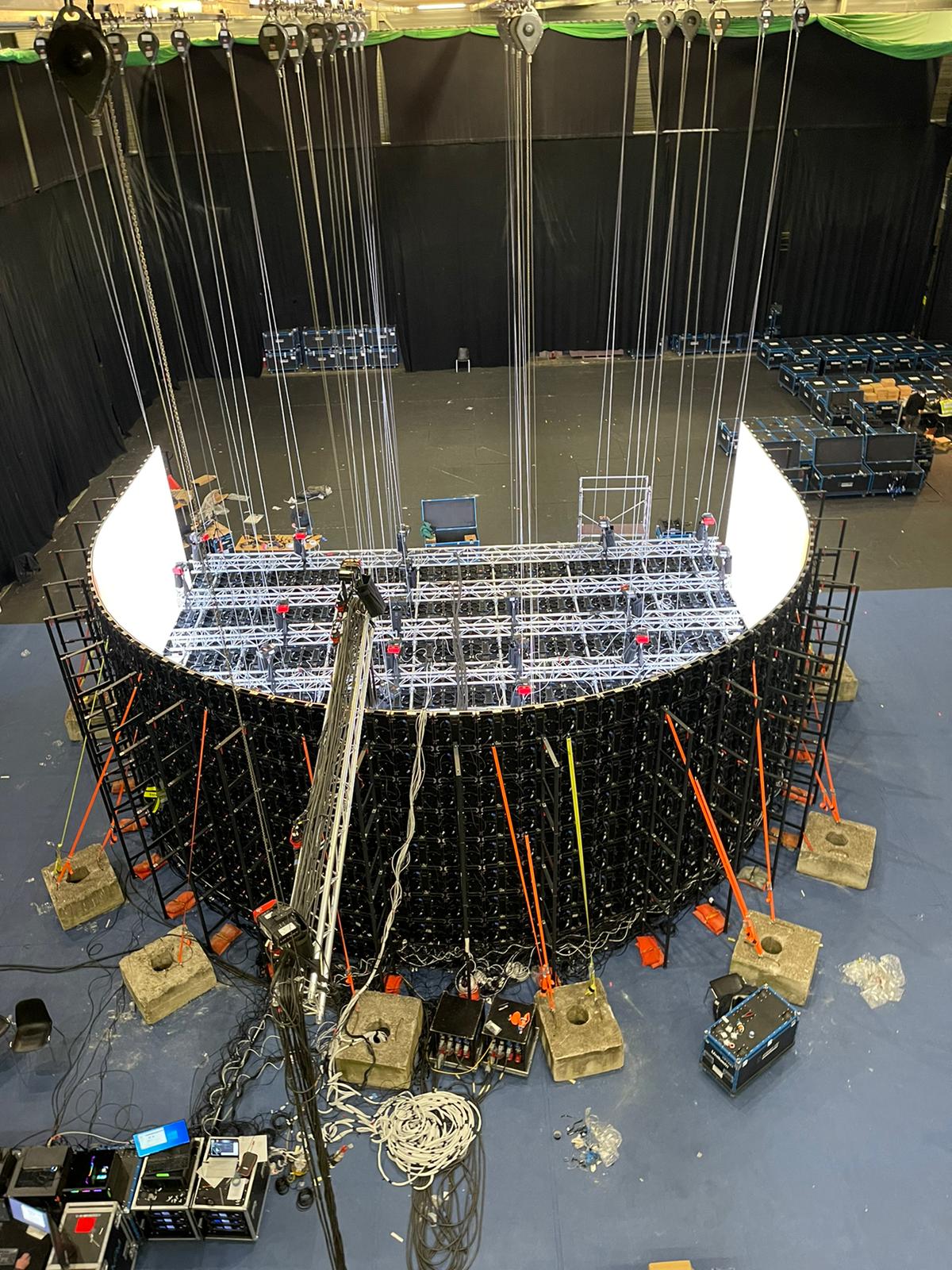

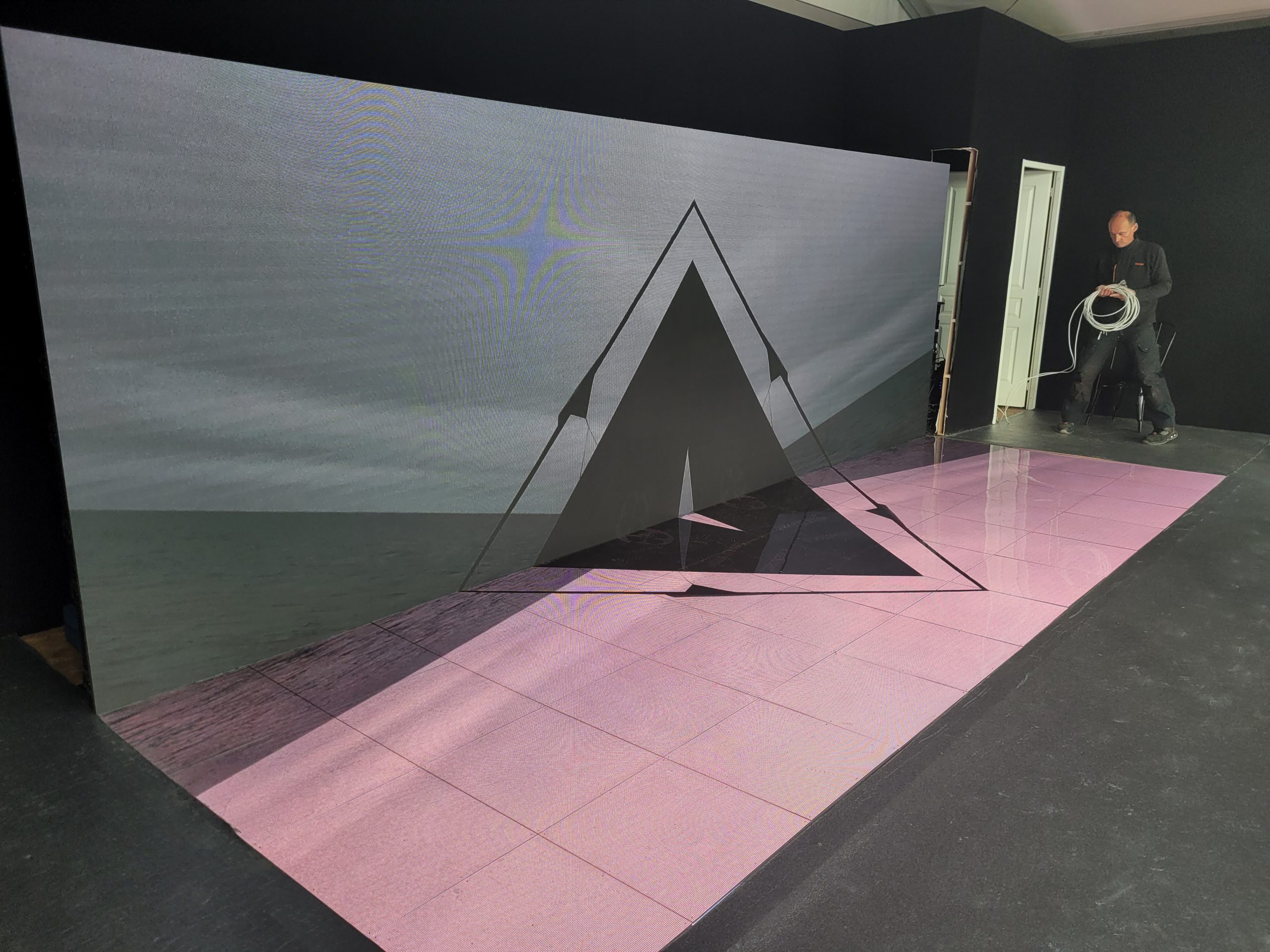

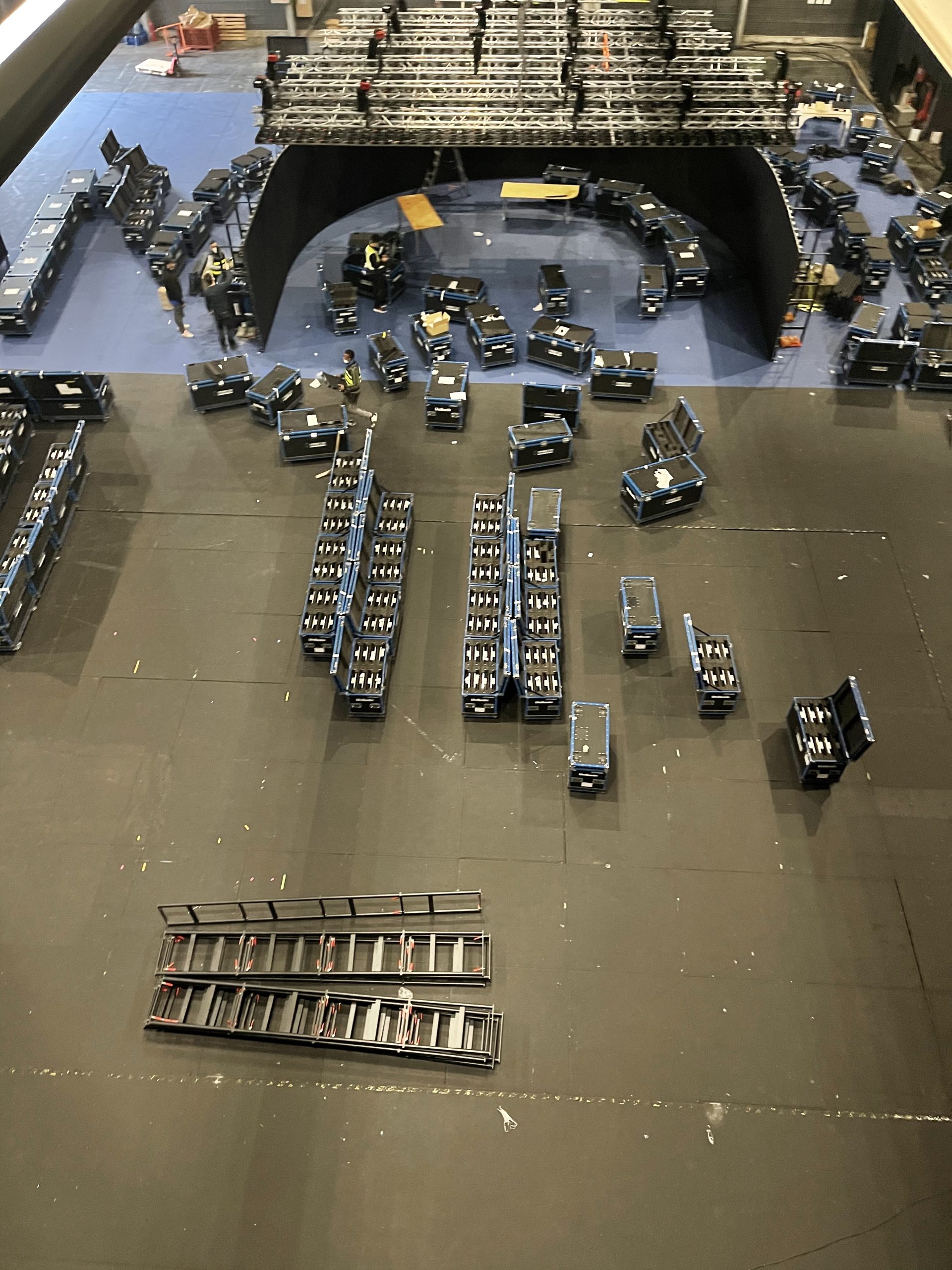

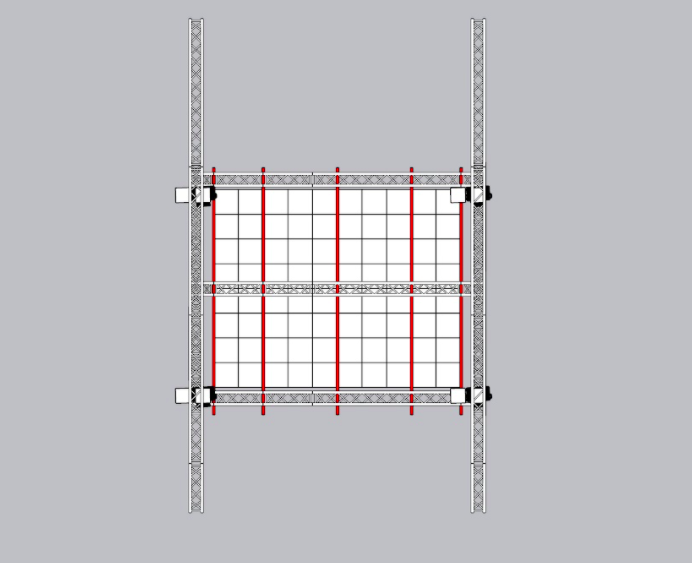

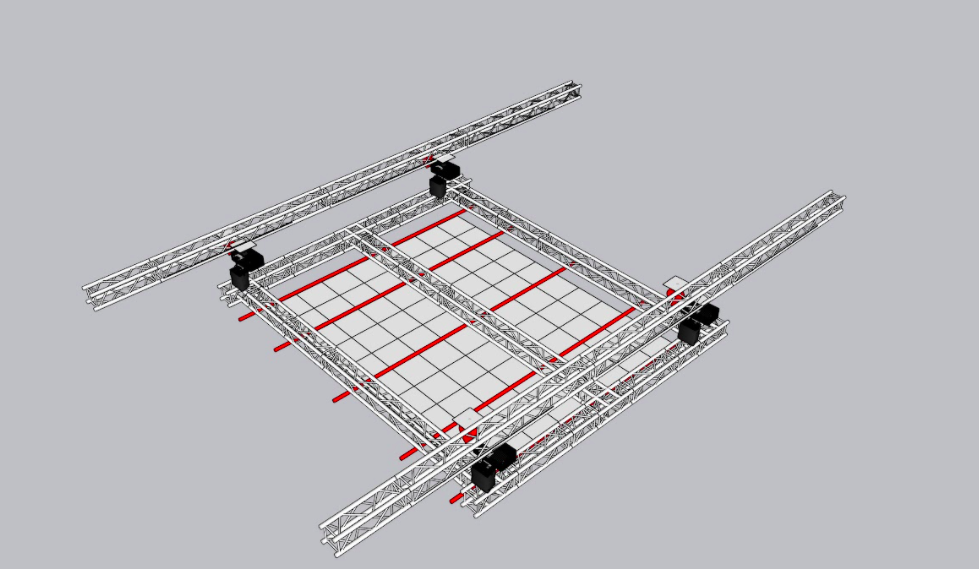

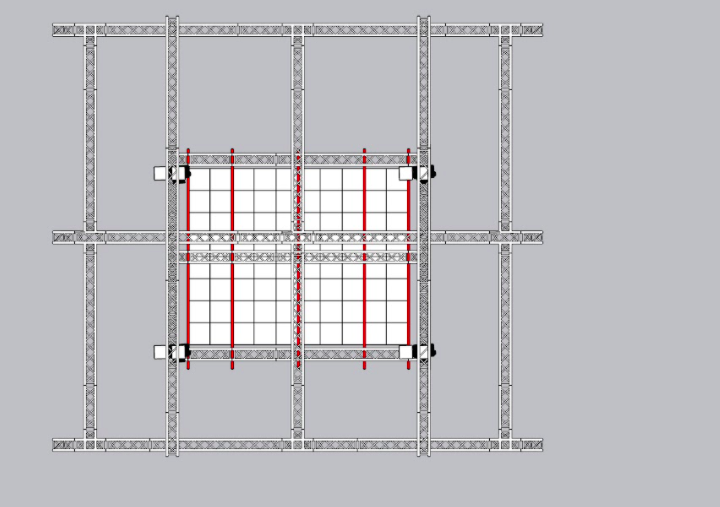

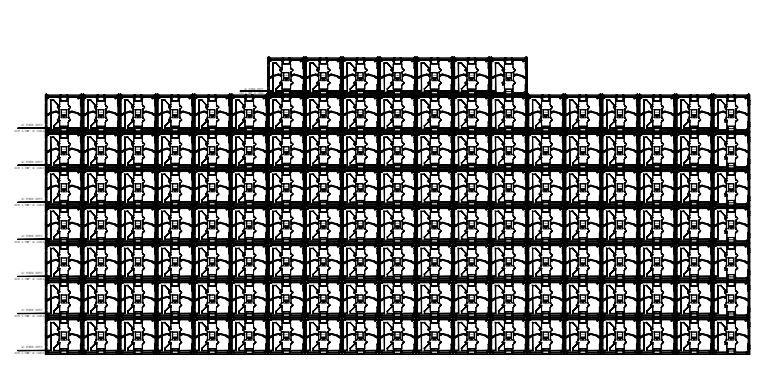

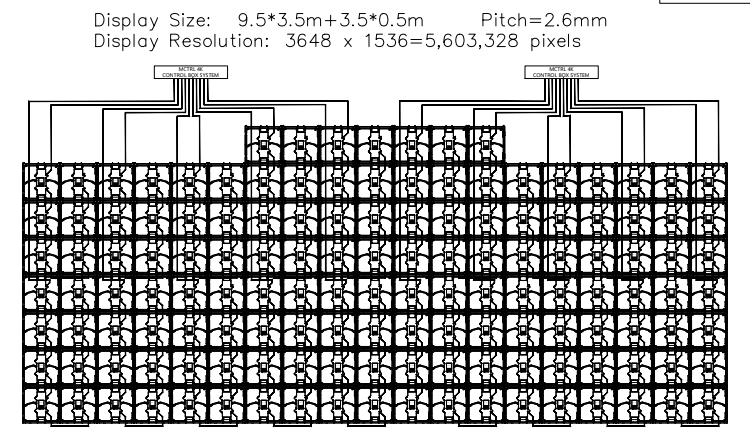

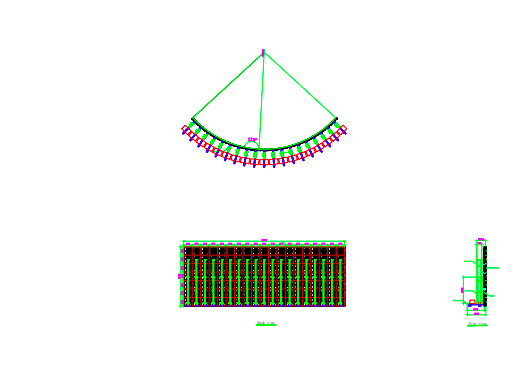

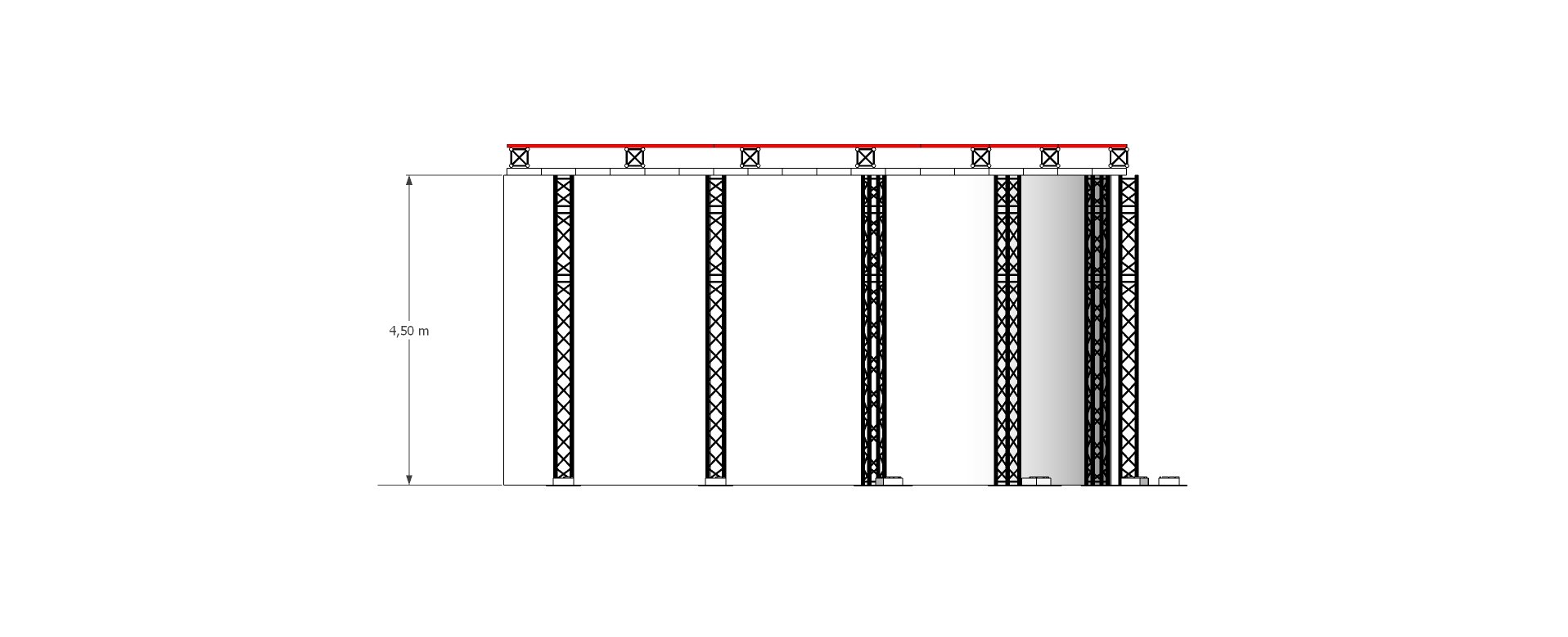

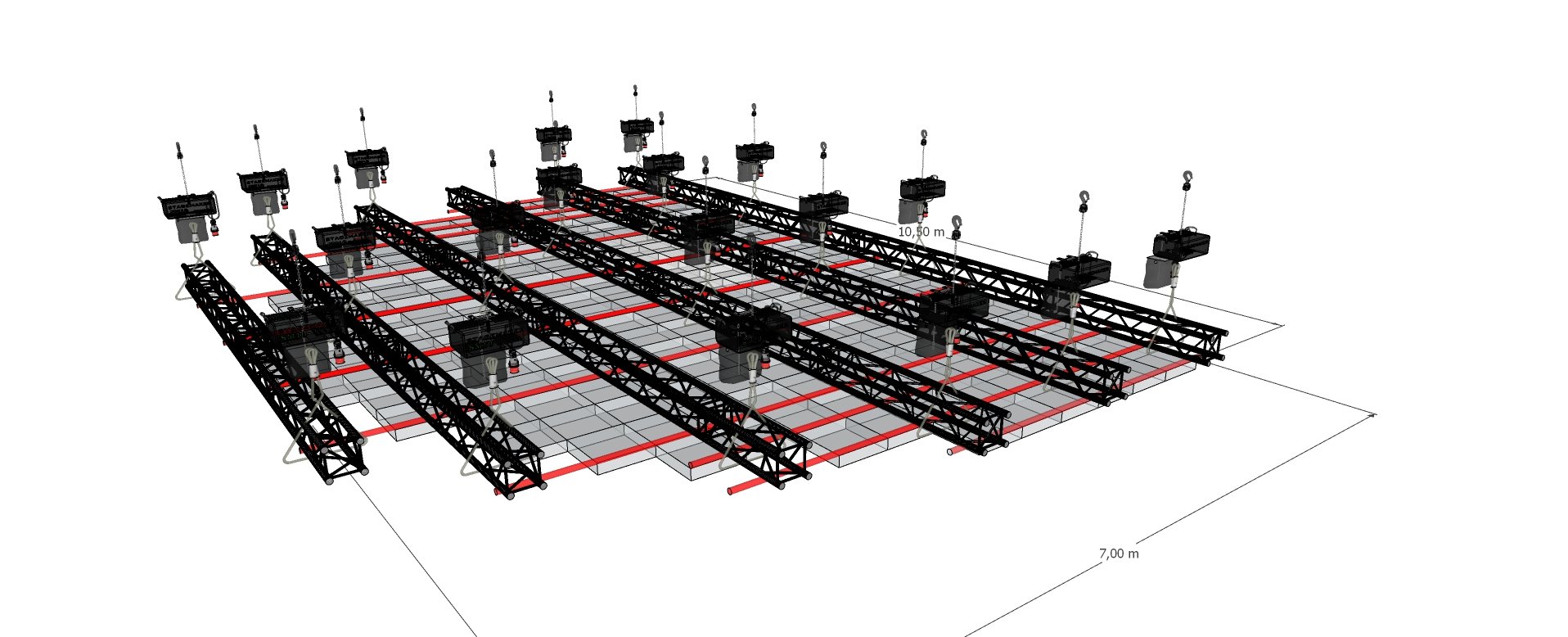

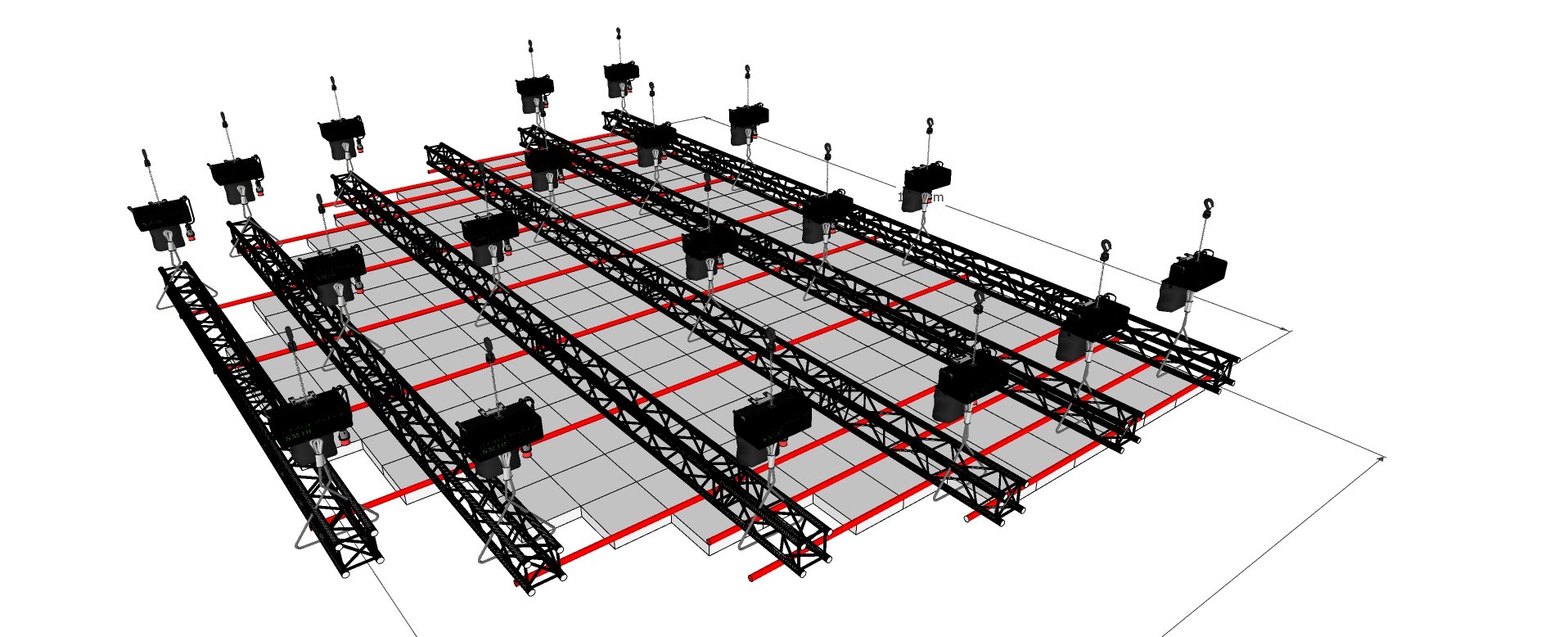

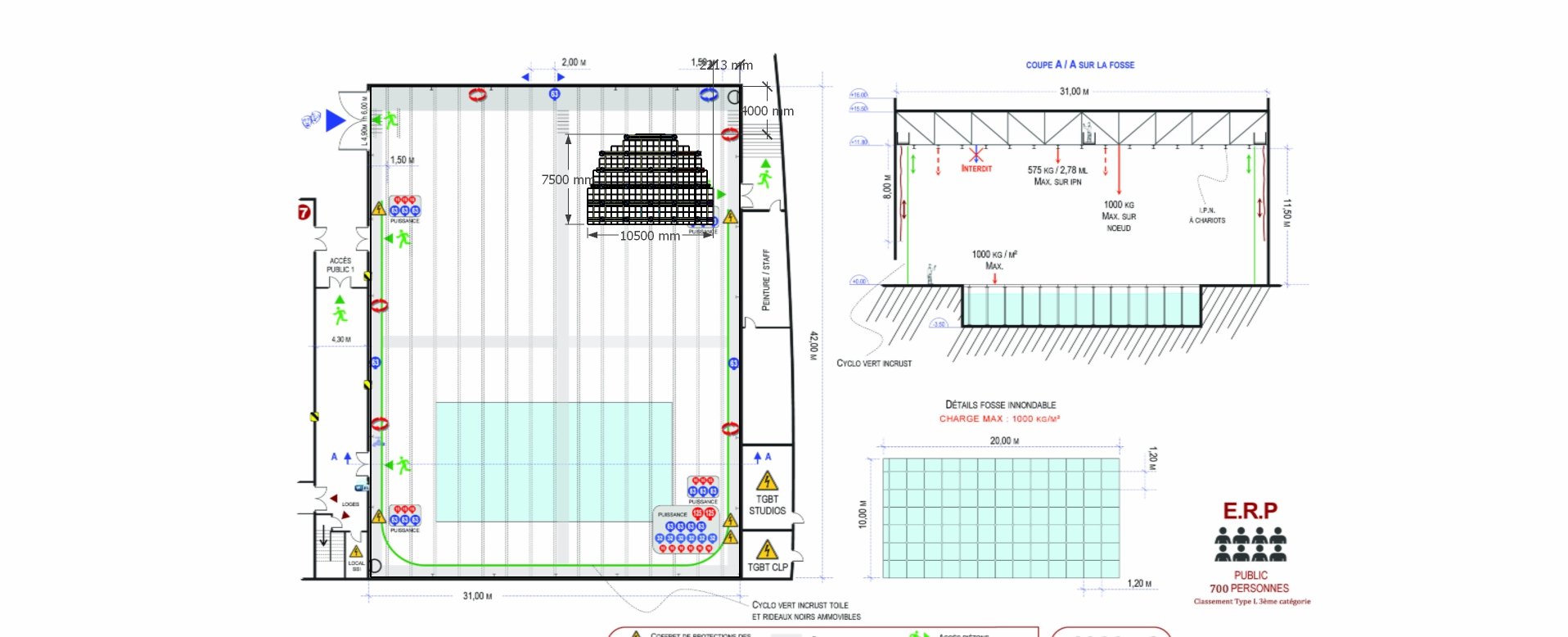

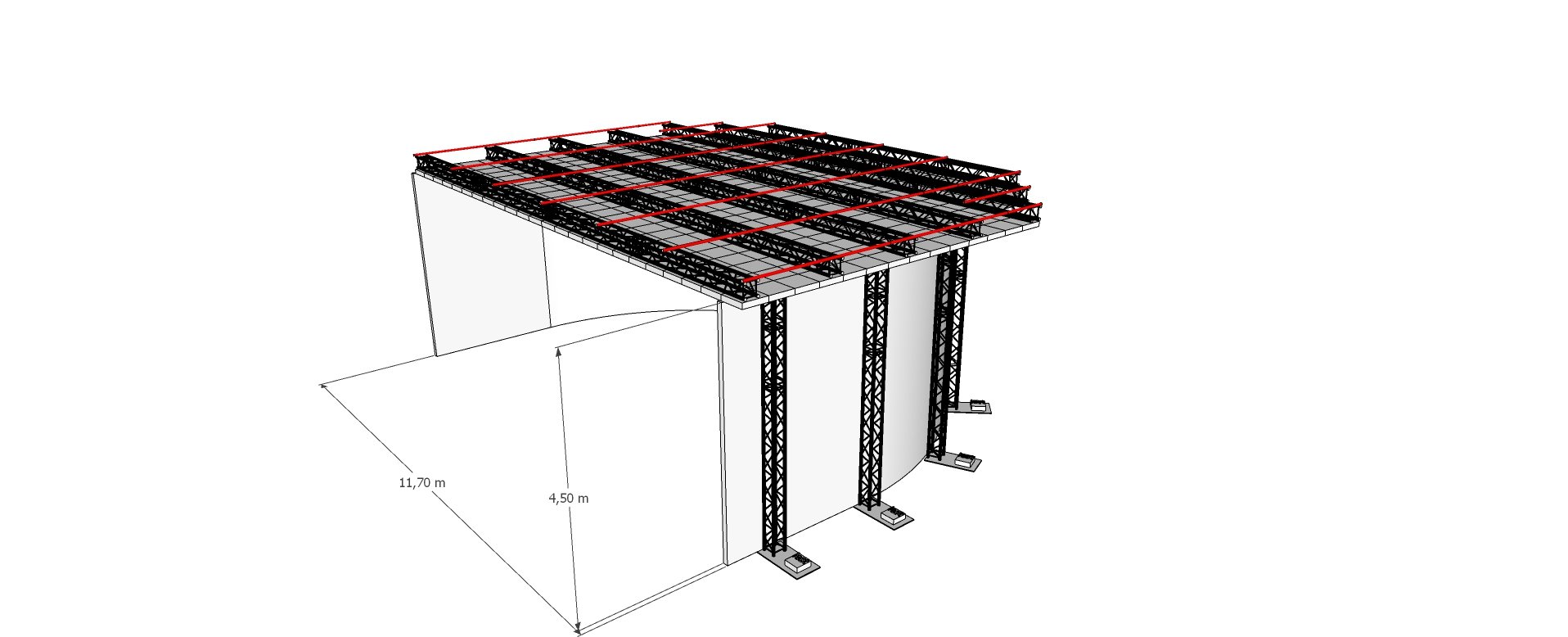

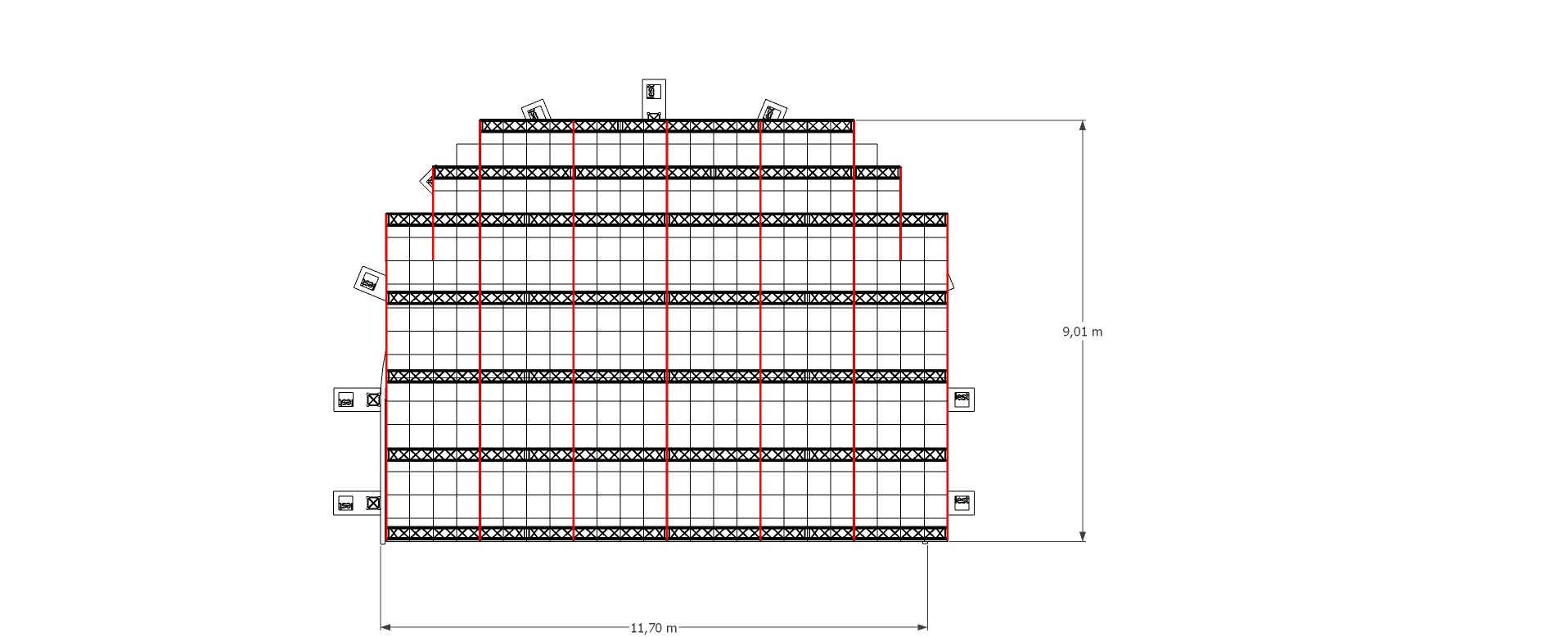

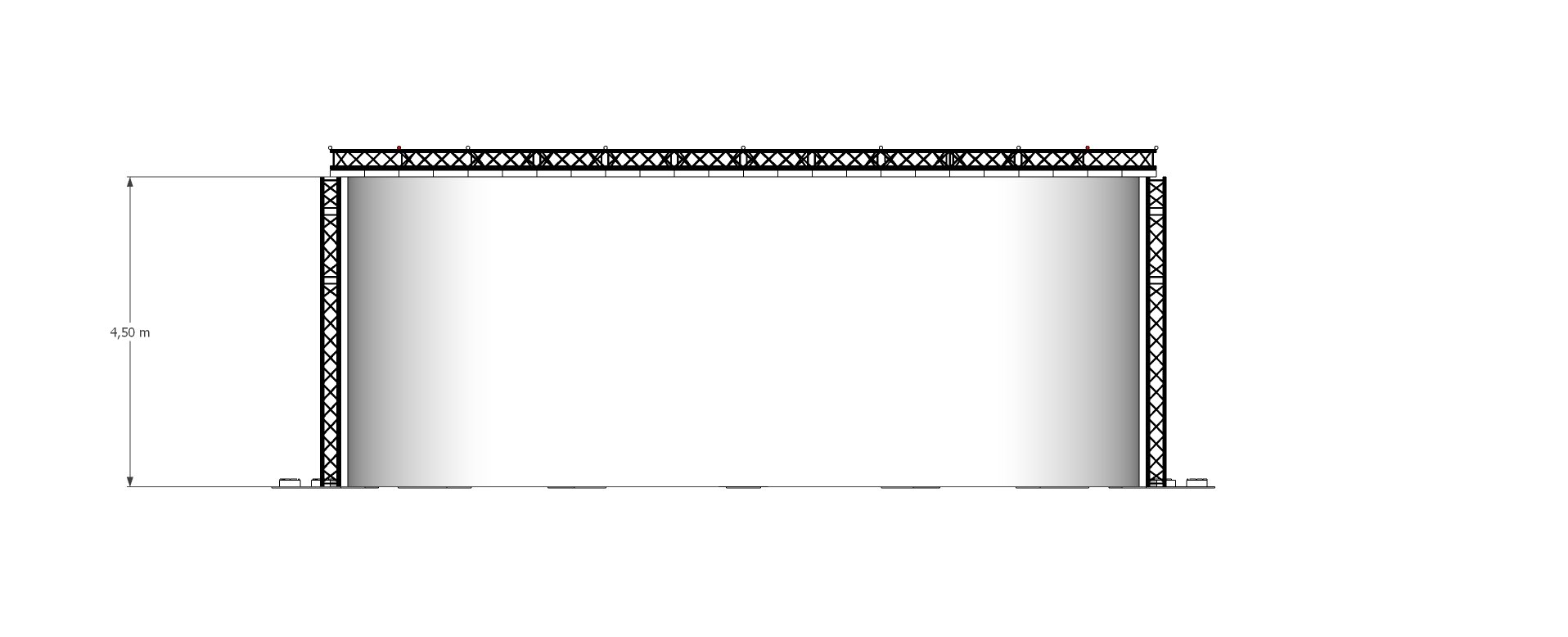

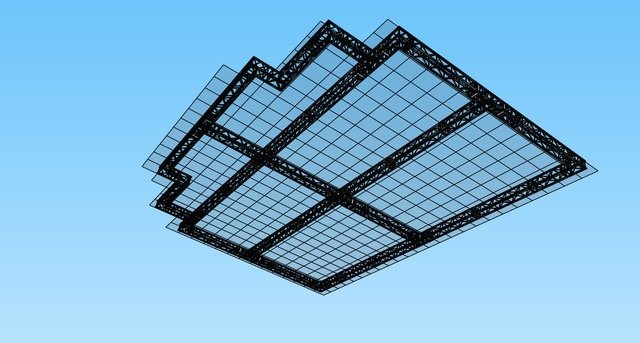

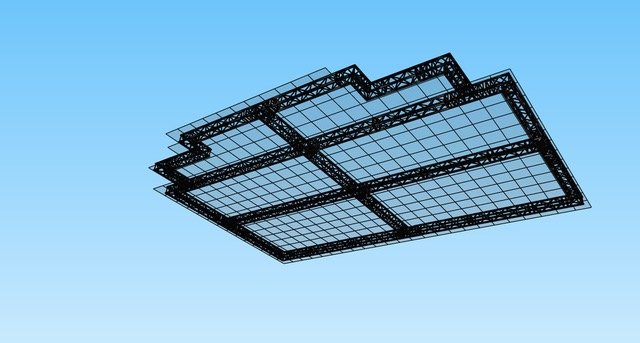

For the opening of our Virtual Production House (VPH) studio, we installed a 110 m² LED wall in the Studio du Kremlin at Ivry-sur-Seine. Our technical team completed the installation in just one week. An additional 18 m² R&D screen is integrated into a larger coworking space for audiovisual professionals, creating an open environment where directors, producers, cinematographers, and all visual content creators can bring their imagination to life.

Since then, VPH has hosted major productions, including the Undiz advertising campaign and Les Gars Sûrs, directed by Louis Leterrier (known for The Transporter, The Incredible Hulk, and Now You See Me). In these productions, XR technology allowed the recording of immersive action sequences (chases, supermarket scenes, even cliffs in the south of France), making performances more authentic while drastically reducing post-production time and costs. Because the same studio could transform into multiple locations, actors could live the action in real time and directors could shoot several radically different scenes without ever leaving the space.